Last Updated on March 16, 2026 by Admin

Quick Answer: The top 25 technology trends for 2026 include Agentic AI, Generative AI at Enterprise Scale, Physical AI, Quantum Computing, Preemptive Cybersecurity, 5G/6G, Edge AI, Digital Twins, Solid-State Batteries, Web3, and 15 more. Here’s the complete list with career opportunities, market data, and learning resources for each trend.


📌 This guide is organized into 5 sections: AI & Automation · Connectivity & Infrastructure · Security & Trust · Physical World · Future Technologies

Technology in 2026 is no longer about experimentation—it is about execution. According to Deloitte’s Tech Trends 2026 report, organizations that master this year’s emerging technologies will define competitive advantage for the next decade. The global AI market alone is projected to reach $312 billion in 2026, growing at a staggering 27.7% annually.

Whether you are a construction professional exploring digital construction management, a tech enthusiast, or a career switcher looking to build future-proof skills, understanding these technology trends is essential. This comprehensive guide covers the 25 most impactful technologies shaping industries worldwide, complete with career opportunities and learning resources.


At-a-Glance: Top 25 Technology Trends 2026 — Comparison Table

The table below summarizes all 25 trends by category, market size, career demand, learning curve, and relevance to construction.

| # | Technology Trend | Category | Market Size (2026 unless noted) | Career Demand | Learning Curve | Construction Relevance |
|---|---|---|---|---|---|---|
| 1 | Agentic AI | AI & Automation | $312B (total AI) | 🔥 Extremely High | ⭐⭐⭐⭐ | Project Automation |
| 2 | Generative AI (Enterprise) | AI & Automation | $66B+ | 🔥 Extremely High | ⭐⭐⭐ | Design & Docs |
| 3 | Domain-Specific LLMs | AI & Automation | Emerging | 🔥 High | ⭐⭐⭐⭐⭐ | Spec & Contract AI |
| 4 | Robotic Process Automation | AI & Automation | $22B | 📈 Growing | ⭐⭐⭐ | Procurement / ERP |
| 5 | Computer Vision | AI & Automation | $19.7B | 🔥 High | ⭐⭐⭐⭐ | Site Safety / QC |
| 6 | 5G / Early 6G | Connectivity | $667B (5G) | 📈 Growing | ⭐⭐⭐ | Connected Sites |
| 7 | IoT Expansion | Connectivity | $1.1T | 📈 Growing | ⭐⭐⭐ | Equipment Tracking |
| 8 | Cloud Computing 3.0 | Connectivity | $679B | 🔥 Extremely High | ⭐⭐⭐ | BIM / Project Data |
| 9 | Edge Computing / Edge AI | Connectivity | $61B | 🔥 High | ⭐⭐⭐⭐ | Site Real-Time AI |
| 10 | Big Data Analytics | Connectivity | $103B | 🔥 High | ⭐⭐⭐ | Cost & Schedule Analytics |
| 11 | Preemptive Cybersecurity | Security & Trust | $93.75B by 2030 | 🔥 Extremely High | ⭐⭐⭐⭐ | Data & IP Protection |
| 12 | Blockchain & Digital Provenance | Security & Trust | $20B | 📈 Growing | ⭐⭐⭐⭐ | Supply Chain Trust |
| 13 | Biometric Authentication | Security & Trust | $68B | 📈 Growing | ⭐⭐ | Access Control / Time |
| 14 | Physical AI & Embodied Intelligence | Physical World | $38B (robotics) | 🔥 High | ⭐⭐⭐⭐⭐ | Autonomous Equipment |
| 15 | Autonomous Vehicles | Physical World | $172B by 2030 | 📈 Growing | ⭐⭐⭐⭐⭐ | Logistics / Earthmoving |
| 16 | Drones / UAVs | Physical World | $54B | 🔥 High | ⭐⭐ | Surveying / Inspection |
| 17 | Digital Twin Technology | Physical World | $73B by 2027 | 🔥 High | ⭐⭐⭐⭐ | Facility & Project Mgmt |
| 18 | VR / AR / XR | Physical World | $250B by 2028 | 📈 Growing | ⭐⭐⭐ | Design Viz / Site Training |
| 19 | Smart Cities & Smart Homes | Physical World | $873B | 📈 Growing | ⭐⭐⭐ | Urban Infrastructure |
| 20 | Solid-State Batteries | Physical World | $8B by 2028 | 📈 Emerging | ⭐⭐⭐⭐ | EV Construction Equipment |
| 21 | Quantum Computing | Future Technologies | $1.3B | 📈 Emerging | ⭐⭐⭐⭐⭐ | Structural Optimization |
| 22 | Natural Language Processing | Future Technologies | $43B | 🔥 High | ⭐⭐⭐ | Contract & Report AI |
| 23 | Genomics & Precision Medicine | Future Technologies | $94B | 📈 Growing | ⭐⭐⭐⭐⭐ | Worker Health Programs |
| 24 | Synthetic Biology | Future Technologies | $28B | 📈 Emerging | ⭐⭐⭐⭐⭐ | Bio-based Materials |
| 25 | Web3 & Metaverse | Future Technologies | $800B by 2030 | 📈 Growing | ⭐⭐⭐ | Virtual Collaboration |

Career Demand: 🔥 Extremely High / High = active hiring surge in 2026 | 📈 Growing = steady upward trajectory | ⭐ = Learning Curve (1=easiest, 5=hardest) | Source: Gartner, Deloitte, IDC, MarketsandMarkets 2025–2026

Section 1: Artificial Intelligence & Automation Technologies

1. Agentic AI and Multi-Agent Systems

The biggest AI shift of 2026 is the move from chatbots to autonomous agents. Agentic AI refers to AI systems that can independently plan, execute, and refine workflows without constant human oversight. According to Gartner’s 2026 Strategic Technology Trends, multi-agent systems now allow modular AI agents to collaborate on complex tasks, dramatically improving automation and scalability.

However, implementation remains a challenge. Only 11% of organizations have agents in production, despite 38% running pilots. Gartner predicts that 40% of agentic AI projects will fail by 2027—not due to technology limitations, but because organizations are automating broken processes rather than redesigning them.

Career Opportunity: AI Agent Developers, Workflow Automation Specialists, and AI Orchestration Engineers are among the fastest-growing roles. Learn the fundamentals with the Machine Learning Specialization.

2. Generative AI at Enterprise Scale

Generative AI has moved beyond experimentation into production deployment. 65% of organizations now use generative AI in at least one business function, according to recent industry surveys. The technology is transforming content creation, software development, product design, and decision-making processes.

IBM’s research indicates that AI startups are scaling from $1 million to $30 million in revenue five times faster than traditional SaaS companies did. This acceleration reflects how generative AI reduces time-to-value for new products and services.

Construction Industry Application: Generative AI is reshaping construction through automated design generation, project planning optimization, and predictive maintenance scheduling. Learn more with Building AI-Powered Chatbots.

3. Domain-Specific Large Language Models

While general-purpose AI models grab headlines, the real enterprise value in 2026 comes from domain-specific language models. These specialized AI systems deliver higher accuracy and compliance for industry-specific use cases, from legal document analysis to medical diagnosis assistance.

Deep learning led the AI market with a 25.3% revenue share in 2025, and the move toward specialized models is accelerating. Industries including construction, healthcare, finance, and manufacturing are deploying custom AI models trained on domain-specific data.

4. Robotic Process Automation (RPA)

Robotic Process Automation continues to mature, now integrating with AI to handle increasingly complex workflows. RPA software bots automate repetitive, rule-based tasks like data entry, validation, and retrieval across enterprise systems.
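To make "rule-based" concrete, here is a minimal sketch of the kind of check an RPA bot might run on incoming invoices before posting them to an ERP system. The `Invoice` fields, the rules, and the sample purchase-order numbers are all hypothetical and chosen for illustration; commercial RPA platforms express such rules in their own designers rather than in hand-written Python.

```python
from dataclasses import dataclass


@dataclass
class Invoice:
    # Hypothetical record layout, for illustration only.
    invoice_id: str
    vendor: str
    amount: float
    po_number: str


def validate(invoice: Invoice, known_pos: set[str]) -> list[str]:
    """Apply fixed business rules and return a list of rule violations."""
    errors = []
    if not invoice.vendor.strip():
        errors.append("missing vendor")
    if invoice.amount <= 0:
        errors.append("amount must be positive")
    if invoice.po_number not in known_pos:
        errors.append("unknown purchase order")
    return errors


def route(invoices: list[Invoice], known_pos: set[str]):
    """Split invoices into an auto-approved queue and a human-review queue."""
    approved, review = [], []
    for inv in invoices:
        errors = validate(inv, known_pos)
        if errors:
            review.append((inv.invoice_id, errors))
        else:
            approved.append(inv.invoice_id)
    return approved, review


known_pos = {"PO-1001", "PO-1002"}
invoices = [
    Invoice("INV-1", "Acme Concrete", 12500.0, "PO-1001"),
    Invoice("INV-2", "", 300.0, "PO-1002"),
    Invoice("INV-3", "Steel Co", -50.0, "PO-9999"),
]
approved, review = route(invoices, known_pos)
print("approved:", approved)
print("needs review:", review)
```

Only the clean record is approved automatically; the other two are routed to a person along with the reasons, which is typically where human effort stays in an automated workflow.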

The key benefits of RPA include increased efficiency, reduced human error, 24/7 operation capability, and significant cost savings. RPA integrates seamlessly with ERP systems for construction companies, automating procurement, inventory management, and financial reporting.

Skills to Develop: Master RPA platforms with the Robotic Process Automation Specialization.

5. Computer Vision and Image Intelligence

Computer vision technology enables machines to interpret and understand visual information from the world. Applications range from quality control in manufacturing to safety monitoring on construction sites, autonomous vehicle navigation, and medical imaging analysis.

Deep learning techniques have dramatically improved computer vision accuracy. The technology now powers facial recognition, object detection, image segmentation, and 3D reconstruction across virtually every industry.

Section 2: Connectivity & Infrastructure Technologies

6. 5G Maturation and Early 6G Development

While 5G networks continue their global rollout, early 6G trials are demonstrating extreme bandwidth and near-instant data transmission capabilities. According to industry research, 6G will integrate AI, sensing, and cyber-physical systems to merge digital and physical worlds.

Key Insight: Despite 6G hype, LTE will still account for 93% of all cellular IoT module shipments in 2026. This is because LTE strikes an optimal balance between cost, performance, and power efficiency for most IoT applications.

Training Resource: Understand next-generation connectivity with 5G Training for Business Professionals.

7. Internet of Things (IoT) Expansion

The Internet of Things connects physical devices, vehicles, buildings, and equipment embedded with electronics, sensors, and connectivity. These connected devices collect and share data to improve efficiency, enable predictive maintenance, and create smarter operations.

Construction Application: IoT is transforming construction sites through equipment tracking, worker safety monitoring, and environmental sensing. Learn how in our comprehensive guide to IoT in the Construction Industry. Also explore Connected Construction Sites for implementation strategies.

8. Cloud Computing 3.0 and AI Infrastructure

Cloud computing is entering its next evolution. The infrastructure built for cloud-first strategies cannot handle AI economics, requiring organizations to rebuild their technology foundations. Microsoft has committed $17.5 billion to new data centers in India alone, while Amazon has pledged $35 billion and Google $15 billion in partnership with Indian conglomerates.

Cloud services fall into three categories: Infrastructure-as-a-Service (IaaS), Platform-as-a-Service (PaaS), and Software-as-a-Service (SaaS). Each lets organizations scale computing resources on demand while paying only for what they use.

Build Cloud Skills: Start with the Cloud Computing Specialization.

9. Edge Computing and Edge AI

Edge computing brings computation and data storage closer to devices rather than centralizing in distant data centers. This reduces latency, improves responsiveness, and enables real-time processing for applications like autonomous vehicles, industrial IoT, and augmented reality.

In 2026, edge AI allows devices to process data locally instead of relying on the cloud. This improves privacy, reduces bandwidth demands, and enables AI capabilities in smartphones, wearables, and smart equipment.
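The bandwidth and privacy benefit can be sketched in a few lines: the device analyzes raw readings locally and sends only a compact summary upstream. The threshold check below is a stand-in for an on-device model, and the function name, sensor values, and threshold are assumptions made for illustration.

```python
from statistics import mean


def summarize_on_device(readings: list[float], threshold: float) -> dict:
    """Reduce raw sensor readings to summary statistics plus flagged values.

    A simple threshold stands in here for a real on-device model.
    """
    alerts = [r for r in readings if r > threshold]
    return {
        "count": len(readings),
        "mean": round(mean(readings), 3),
        "max": max(readings),
        "alerts": alerts,
    }


# Hypothetical vibration readings from a piece of site equipment.
vibration = [0.8, 0.9, 1.1, 4.7, 0.7, 1.0]
payload = summarize_on_device(vibration, threshold=3.0)

# Only this small payload would leave the device, not the raw stream.
print(payload)
```

With a real sensor producing thousands of readings per minute, the payload stays the same size, which is the point of processing at the edge.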

Foundation Course: Build your computing fundamentals with the Computer Fundamentals Specialization.

10. Big Data Analytics and Data Intelligence

Big Data technology enables organizations to collect, store, process, and analyze massive datasets from diverse sources including social media, IoT devices, and business transactions. Analytics transforms this data into actionable insights for better decision-making.

Applications span customer analytics, fraud detection, predictive maintenance, and supply chain optimization. For those interested in Data Science careers, this remains one of the highest-demand skill areas.

Career Development: Complete the Google Data Analytics Professional Certificate to build job-ready skills.

Section 3: Security & Trust Technologies

11. Preemptive Cybersecurity

Cybersecurity in 2026 shifts from reactive to proactive, using AI to block threats before they strike. Gartner identifies preemptive cybersecurity as a top strategic trend, emphasizing the need to protect reputation, ensure compliance, and maintain stakeholder confidence.

The AI cybersecurity market is growing at 24.3% CAGR, projected to reach $93.75 billion by 2030. Over 90% of cybersecurity professionals are concerned about hackers leveraging AI in cyberattacks, making advanced defense capabilities essential.

Launch Your Career: Start with the Introduction to Cybersecurity Nanodegree on Udacity.

12. Blockchain and Digital Provenance

Blockchain technology provides a decentralized, tamper-proof ledger for secure transactions. In 2026, digital provenance becomes essential for verifying the origin and integrity of software, data, and AI-generated content.
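The tamper-evidence property comes from linking each record to the hash of the one before it. The sketch below shows only that hash-linking idea using Python's standard `hashlib`; it deliberately omits the decentralized parts (networking, consensus, signatures), and the block layout and sample records are assumptions for illustration.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index: int, data: str, prev_hash: str) -> str:
    """Hash a block's contents together with the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain: list[dict], data: str) -> None:
    """Append a new block that commits to the current tip of the chain."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(index, data, prev_hash),
    })


def is_valid(chain: list[dict]) -> bool:
    """Recompute every hash and check each link to the previous block."""
    prev_hash = GENESIS_HASH
    for i, block in enumerate(chain):
        if block["index"] != i or block["prev_hash"] != prev_hash:
            return False
        if block["hash"] != block_hash(i, block["data"], block["prev_hash"]):
            return False
        prev_hash = block["hash"]
    return True


ledger: list[dict] = []
append_block(ledger, "steel batch 17 shipped from mill")
append_block(ledger, "steel batch 17 received on site")
print(is_valid(ledger))   # chain is intact

ledger[0]["data"] = "steel batch 99 shipped from mill"  # tamper with history
print(is_valid(ledger))   # the stored hash no longer matches
```

Editing an earlier record changes its hash, which breaks every later link, so any alteration is detectable by anyone who rechecks the chain.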

Applications extend beyond cryptocurrency to supply chain management, smart contracts, identity verification, digital voting, and real estate transactions. The technology enables trust and compliance in an increasingly digital economy.

Deep Dive: Explore the Blockchain Revolution Specialization for enterprise applications.

13. Biometric Authentication

Biometrics uses unique physical or behavioral characteristics for identification, including fingerprints, facial recognition, iris scans, and voice recognition. These systems provide more secure and convenient authentication than traditional passwords.

Applications include physical access control, time and attendance systems, border security, and law enforcement. Robust data security and privacy protection policies are essential for any biometric implementation.

Section 4: Physical World Technologies

14. Physical AI and Embodied Intelligence

Physical AI brings intelligence into the real world, powering robots, drones, and smart equipment for operational impact. According to Gartner, this represents a fundamental shift where intelligence is no longer confined to screens but is embodied, autonomous, and solving real problems.

Amazon has deployed its millionth robot, with DeepFleet AI coordinating the entire fleet and improving warehouse travel efficiency by 10%. BMW factories now have cars driving themselves through kilometer-long production routes without human assistance.

15. Autonomous Vehicles

Autonomous vehicles use sensors, cameras, lidar, radar, and AI algorithms to navigate without human input. The SAE defines six levels of driving automation, from no automation (Level 0) through driver assistance (Level 1) to full automation (Level 5), where no driver is required.
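For readers who think in code, the SAE J3016 scale, which is numbered from 0 to 5, maps naturally onto an enumeration. The sketch below uses shortened level names, and the helper function is a hypothetical illustration of the main dividing line in the standard: at Levels 0 to 2 a human must supervise the driving at all times.

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """SAE J3016 driving automation levels (names shortened)."""
    NO_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_AUTOMATION = 2
    CONDITIONAL_AUTOMATION = 3
    HIGH_AUTOMATION = 4
    FULL_AUTOMATION = 5


def human_must_supervise(level: SAELevel) -> bool:
    """At Levels 0-2 the human driver monitors the road continuously."""
    return level <= SAELevel.PARTIAL_AUTOMATION


for level in SAELevel:
    print(level.value, level.name, "supervised:", human_must_supervise(level))
```

Level 3 is the transition point: the system drives under limited conditions, but a person must be ready to take over when requested.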

Learn More: Study the technology behind self-driving cars with the Self-Driving Cars Specialization.

16. Drones and Unmanned Aerial Vehicles (UAVs)

Drones are transforming industries from construction to agriculture, logistics, and emergency response. They enable aerial photography, mapping and surveying, environmental monitoring, and package delivery at unprecedented scale and efficiency.

Construction professionals can learn how to become a drone pilot and explore how drones are changing the construction industry. Develop robotics skills with the Robotics: Aerial Robotics course.

17. Digital Twin Technology

Digital twins create virtual representations of physical objects or systems, enabling simulation and performance analysis. The technology integrates IoT, big data analytics, cloud computing, and 3D modeling to predict real-world behavior.

In construction, digital twins revolutionize project planning, design optimization, and facility management. Explore our deep dive on Digital Twin technology in construction and real estate.

18. Virtual Reality and Augmented Reality (VR/AR)

Extended Reality (XR) technologies merge physical and virtual worlds. Virtual Reality creates immersive simulated environments, while Augmented Reality overlays digital information on the real world. CES 2026 marked smart glasses going mainstream, with major releases from Meta, Asus, RayNeo, and others.

In construction, Virtual Reality supports design visualization and Augmented Reality supports on-site guidance. Learn the fundamentals with Introduction to XR Technologies.

19. Smart Cities and Smart Homes

Smart city technology uses IoT, data analytics, and cloud computing to improve urban services including transportation, energy, waste management, and public safety. Smart home technology creates efficient, comfortable, secure living environments through connected devices.

Explore Smart Home Systems for residential applications. Build expertise with the Smart Cities course.

20. Solid-State Batteries and Next-Gen Energy Storage

Solid-state batteries replace liquid electrolytes with solid materials, improving energy density and safety. At CES 2026, Verge Motorcycles announced the world’s first solid-state battery production vehicle, promising 370 miles on a charge with 10-minute fast charging.

This technology enables faster charging, longer battery life, and reduced fire risks in electric vehicles and consumer electronics. Semi-solid state batteries are also emerging for smartphones, offering improved safety and longevity.

Section 5: Future Technologies

21. Quantum Computing Stabilization

Quantum computing uses quantum-mechanical phenomena like superposition and entanglement to tackle certain problems that are impractical for classical computers. According to IBM, quantum advantage, the point where quantum computers solve problems with demonstrable improvement over classical methods, is likely to emerge by the end of 2026.

Advances in error correction are making mid-scale quantum computers more reliable. Applications include optimization problems, cryptography, drug discovery, and financial modeling. Organizations that are quantum-ready are three times more likely to belong to multiple technology ecosystems.

22. Natural Language Processing (NLP)

Natural Language Processing enables computers to understand, generate, and interpret human language. Applications include translation, sentiment analysis, text summarization, question answering, and conversational AI.

NLP powers the chatbots and virtual assistants that are revolutionizing customer service across industries. The technology combines rule-based, statistical, and machine learning techniques to analyze natural language data.

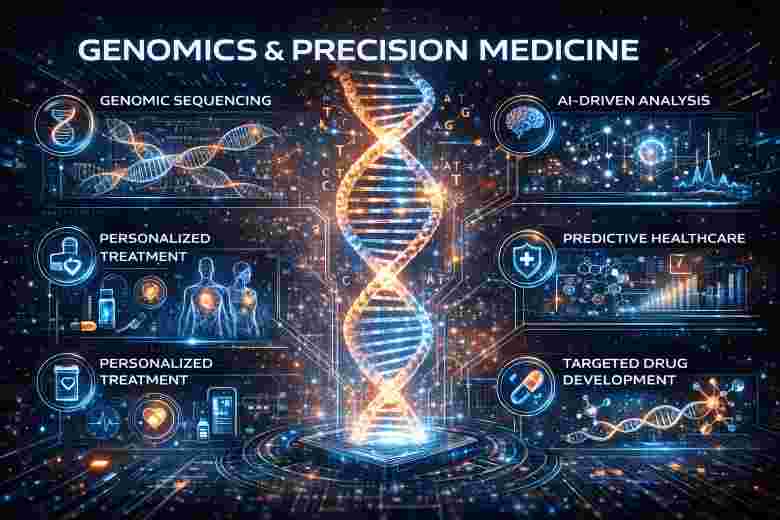

23. Genomics and Precision Medicine

Genomics uses DNA sequencing and genome editing to study genetic makeup, while precision medicine tailors treatments to individual patients based on their genetics. This technology is improving diagnosis, treatment, and prevention of diseases.

Applications span oncology, neurology, cardiology, and psychiatry. The field has the potential to reduce healthcare costs by eliminating ineffective treatments. Explore the Genomic Data Science Specialization for career development.

24. Synthetic Biology and Biomanufacturing

Synthetic biology engineers microorganisms to produce pharmaceuticals, enzymes, and materials at scale. This approach lowers production costs while reducing environmental impact, offering renewable, carbon-neutral production pathways.

The convergence of biology and manufacturing enables new sustainable materials, food systems, and industrial processes. Companies like Checkerspot are engineering algae to create high-performance, bio-based materials.

25. Web3 and the Evolving Metaverse

Web3 represents the next internet phase characterized by decentralized networks, blockchain technologies, and greater user data ownership. The Metaverse creates immersive virtual environments where users can meet, work, and collaborate.

While full metaverse adoption remains gradual, the underlying technologies continue advancing. Organizations are exploring virtual collaboration spaces, digital twins of physical locations, and new forms of customer engagement.

Key Takeaways for 2026

The technology landscape of 2026 is defined by execution rather than experimentation. As Deloitte summarizes, the organizations that succeed will not be those with the most sophisticated technology, but those with the courage to redesign rather than automate, the discipline to connect every investment to business outcomes, and the velocity to execute before the window closes.

For construction professionals, these technologies are not abstract—they are reshaping how projects are designed, built, and managed. From digital construction management to connected construction sites, the industry is undergoing rapid digital transformation.

The gap between technology leaders and laggards grows exponentially. How you respond determines which side of that gap you are on. Start building future-proof skills today.


Frequently Asked Questions (FAQs)

What is the most trending technology in 2026?

Agentic AI and multi-agent systems are the most significant technology trends of 2026. These systems can independently plan, execute, and refine workflows, moving AI beyond simple task automation to autonomous operations.

What is the AI market size projected for 2026?

The global AI market is projected to reach $312 billion in 2026, growing at approximately 27.7% annually. By 2030, the market is expected to reach $827 billion to $1.8 trillion, depending on the research source.
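As a rough consistency check on these figures, the sketch below compounds the $312 billion 2026 estimate forward at 27.7% per year. Holding the growth rate constant through 2030 is a simplifying assumption, not something the cited forecasts state, and the function name is illustrative.

```python
def project_market(base_value: float, annual_growth: float, years: int) -> float:
    """Compound a starting value forward at a fixed annual growth rate."""
    return base_value * (1 + annual_growth) ** years


BASE_2026 = 312.0   # USD billions, the 2026 estimate quoted above
GROWTH = 0.277      # 27.7% per year, assumed constant for this sketch

for year in range(2026, 2031):
    value = project_market(BASE_2026, GROWTH, year - 2026)
    print(f"{year}: ${value:,.0f}B")
```

Under that assumption the 2030 value comes out near $830 billion, in line with the low end of the quoted range; the $1.8 trillion figure would require faster growth or a broader market definition.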

What are the top 5 emerging technologies for 2026?

The top five emerging technologies for 2026 are: (1) Agentic AI and multi-agent systems, (2) Physical AI with embodied robotics, (3) Quantum computing reaching practical advantage, (4) Solid-state battery technology, and (5) Preemptive AI-powered cybersecurity.

How is AI impacting the construction industry in 2026?

AI is transforming construction through automated design generation, predictive maintenance, safety monitoring, project scheduling optimization, and quality control. Technologies like digital twins, drones, and IoT sensors are creating connected construction sites with real-time data analytics.

What skills should I develop to stay relevant with these technology trends?

Focus on AI and machine learning fundamentals, data analytics, cloud computing, cybersecurity basics, and domain-specific AI applications in your industry. Soft skills including adaptability, critical thinking, and human-AI collaboration are equally important.

When will quantum computing become practically useful?

IBM research indicates that quantum advantage—where quantum computers solve problems demonstrably better than classical computers—is likely to emerge by the end of 2026. Initial applications will focus on optimization problems, cryptography, and pharmaceutical research.